The easiest way to build a byte-wide adder is to modularize the problem into reusable components and then replicate those components. In this case, we need to create only one component: a full binary adder. The difference between a full adder and the previous adder we looked at is that a full adder accepts an A and a B input plus a carry-in (CI) input. Once we have a full adder, we can string eight of them together to create a byte-wide adder and cascade the carry bit from one adder to the next.

Next, we'll look at how a full adder is implemented as a circuit. Full adders can be implemented in a wide variety of ways. Because there are now three input bits (CI, A and B), the logic table has eight rows:

| CI | A | B | Q | CO |
|----|---|---|---|----|
| 0  | 0 | 0 | 0 | 0  |
| 0  | 0 | 1 | 1 | 0  |
| 0  | 1 | 0 | 1 | 0  |
| 0  | 1 | 1 | 0 | 1  |
| 1  | 0 | 0 | 1 | 0  |
| 1  | 0 | 1 | 0 | 1  |
| 1  | 1 | 0 | 0 | 1  |
| 1  | 1 | 1 | 1 | 1  |

There are many different ways that you might implement this table. I am going to present one method here that has the benefit of being easy to understand. If you look at the Q bit, you can see that the top 4 bits are behaving like an XOR gate with respect to A and B, while the bottom 4 bits are behaving like an XNOR gate with respect to A and B. Similarly, the top 4 bits of CO are behaving like an AND gate with respect to A and B, and the bottom 4 bits behave like an OR gate. Taking those facts, you can build a circuit that implements a full adder by letting CI choose between the two behaviors. This definitely is not the most efficient way to implement a full adder, but it is extremely easy to understand and to trace through the logic using this method. If you are so inclined, see what you can do to implement this logic with fewer gates.

Now we have a piece of functionality called a "full adder." What a computer engineer then does is "black-box" it so that he or she can stop worrying about the details of the component. A black box for a full adder has three inputs (A, B and CI) and two outputs (Q and CO). With that black box, it is now easy to draw a 4-bit full adder:

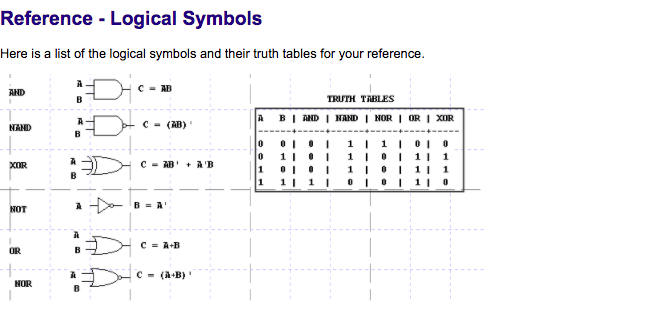
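The full adder and the cascaded carry described above can be sketched in a few lines of Python. This is only an illustration, not the article's circuit: the function names (`full_adder`, `ripple_add`, `to_bits`, `from_bits`) are made up for this example, and the way CI selects between the XOR/XNOR and AND/OR behaviors is one reading of the "top 4 bits / bottom 4 bits" observation.

```python
def full_adder(a: int, b: int, ci: int) -> tuple[int, int]:
    """Return (q, co) for one-bit inputs a, b and carry-in ci."""
    x = a ^ b  # XOR of A and B
    # CI = 0: Q behaves like XOR;  CI = 1: Q behaves like XNOR.
    q = ((1 - ci) & x) | (ci & (1 - x))
    # CI = 0: CO behaves like AND; CI = 1: CO behaves like OR.
    co = ((1 - ci) & (a & b)) | (ci & (a | b))
    return q, co


def ripple_add(a_bits: list[int], b_bits: list[int], ci: int = 0) -> tuple[list[int], int]:
    """Add two equal-length bit lists (least significant bit first).

    Each full adder's carry-out feeds the next adder's carry-in.
    Returns (sum_bits, final_carry_out).
    """
    if len(a_bits) != len(b_bits):
        raise ValueError("inputs must have the same number of bits")
    sum_bits = []
    for a, b in zip(a_bits, b_bits):
        q, ci = full_adder(a, b, ci)
        sum_bits.append(q)
    return sum_bits, ci


def to_bits(n: int, width: int) -> list[int]:
    """Split n into `width` bits, least significant bit first."""
    return [(n >> i) & 1 for i in range(width)]


def from_bits(bits: list[int]) -> int:
    """Inverse of to_bits."""
    return sum(bit << i for i, bit in enumerate(bits))


# 4-bit example: 11 + 6 = 17, which does not fit in 4 bits,
# so the sum bits hold 1 and the final carry-out is 1.
bits, carry = ripple_add(to_bits(11, 4), to_bits(6, 4))
print(from_bits(bits), carry)  # prints: 1 1
```

Changing the width passed to `to_bits` from 4 to 8 gives the byte-wide adder; nothing else in the sketch needs to change, which is the point of black-boxing the full adder.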

Here's the basic operation of NAND and NOR gates - you can see they are simply inversions of AND and OR gates:

NAND Gate

| A | B | Q |
|---|---|---|
| 0 | 0 | 1 |
| 0 | 1 | 1 |
| 1 | 0 | 1 |
| 1 | 1 | 0 |

NOR Gate

| A | B | Q |
|---|---|---|
| 0 | 0 | 1 |
| 0 | 1 | 0 |
| 1 | 0 | 0 |
| 1 | 1 | 0 |

The final two gates that are sometimes added to the list are the XOR and XNOR gates, also known as "exclusive or" and "exclusive nor" gates, respectively. The idea behind an XOR gate is, "If either A OR B is 1, but NOT both, Q is 1." XOR might not be included in a list of gates because you can implement it easily using the original three gates listed. If you try all four different patterns for A and B and trace them through such a circuit, you will find that Q behaves like an XOR gate. Since there is a well-understood symbol for XOR gates, it is generally easier to think of XOR as a "standard gate" and use it in the same way as AND and OR in circuit diagrams.

Now suppose you want to add two single bits, A and B. Three of the four possible sums are simple, but 1 + 1 gives 10 in binary. In that case, you have that pesky carry bit to worry about. If you don't care about carrying (because this is, after all, a 1-bit addition problem), then you can see that you can solve this problem with an XOR gate. But if you do care, then you might rewrite your equations to always include 2 bits of output, like this: 0 + 0 = 00, 0 + 1 = 01, 1 + 0 = 01 and 1 + 1 = 10. From these equations you can form the logic table:

1-bit Adder with Carry-Out

| A | B | Q | CO |
|---|---|---|----|
| 0 | 0 | 0 | 0  |
| 0 | 1 | 1 | 0  |
| 1 | 0 | 1 | 0  |
| 1 | 1 | 0 | 1  |

By looking at this table you can see that you can implement Q with an XOR gate and CO (carry-out) with an AND gate.

What if you want to add two 8-bit bytes together? This becomes slightly harder.
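As a rough sketch of the two ideas in this passage, the Python below builds XOR out of AND, OR and NOT, then uses it for the 1-bit adder with carry-out (often called a half adder). The function names are invented for this example, and the XOR wiring shown, "(A OR B) AND NOT (A AND B)", is just one possible arrangement and not necessarily the one the article had in mind.

```python
def not_gate(a: int) -> int:
    return 1 - a


def and_gate(a: int, b: int) -> int:
    return a & b


def or_gate(a: int, b: int) -> int:
    return a | b


def xor_gate(a: int, b: int) -> int:
    """'Either A OR B is 1, but NOT both', using only the three basic gates."""
    return and_gate(or_gate(a, b), not_gate(and_gate(a, b)))


def one_bit_adder(a: int, b: int) -> tuple[int, int]:
    """Return (q, co): Q comes from an XOR gate, CO from an AND gate."""
    return xor_gate(a, b), and_gate(a, b)


# Reproduce the four two-bit sums, written as CO followed by Q.
for a in (0, 1):
    for b in (0, 1):
        q, co = one_bit_adder(a, b)
        print(f"{a} + {b} = {co}{q}")
# prints:
# 0 + 0 = 00
# 0 + 1 = 01
# 1 + 0 = 01
# 1 + 1 = 10
```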

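The NOT gate has one input called A and one output called Q ("Q" is used for the output because if you used "O," you would easily confuse it with zero). Q is always the opposite of A: when A is 0, Q is 1, and when A is 1, Q is 0.

The AND gate performs a logical "and" operation on two inputs, A and B:

| A | B | Q |
|---|---|---|
| 0 | 0 | 0 |
| 0 | 1 | 0 |
| 1 | 0 | 0 |
| 1 | 1 | 1 |

The idea behind an AND gate is, "If A AND B are both 1, then Q should be 1." You can see that behavior in the logic table for the gate. You read this table row by row, like this:

| A | B | Q | Reading |
|---|---|---|---------|
| 0 | 0 | 0 | If A is 0 AND B is 0, Q is 0. |
| 0 | 1 | 0 | If A is 0 AND B is 1, Q is 0. |
| 1 | 0 | 0 | If A is 1 AND B is 0, Q is 0. |
| 1 | 1 | 1 | If A is 1 AND B is 1, Q is 1. |

The basic idea behind an OR gate is, "If A is 1 OR B is 1 (or both are 1), then Q is 1."

| A | B | Q |
|---|---|---|
| 0 | 0 | 0 |
| 0 | 1 | 1 |
| 1 | 0 | 1 |
| 1 | 1 | 1 |

Those are the three basic gates (that's one way to count them). It is quite common to recognize two others as well: the NAND and the NOR gate. These two gates are simply combinations of an AND or an OR gate with a NOT gate. If you include these two gates, then the count rises to five.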
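To make the gate descriptions concrete, here is a minimal Python sketch that models each gate as a function on the bits 0 and 1 and lists its logic table. The function names and the `truth_table` helper are assumptions made for this illustration; real gates are circuits, not function calls.

```python
from itertools import product


def not_gate(a: int) -> int:
    """Inverter: Q is the opposite of A."""
    return 1 - a


def and_gate(a: int, b: int) -> int:
    """Q is 1 only if A and B are both 1."""
    return a & b


def or_gate(a: int, b: int) -> int:
    """Q is 1 if A is 1, B is 1, or both are 1."""
    return a | b


def nand_gate(a: int, b: int) -> int:
    """An AND gate followed by a NOT gate."""
    return not_gate(and_gate(a, b))


def nor_gate(a: int, b: int) -> int:
    """An OR gate followed by a NOT gate."""
    return not_gate(or_gate(a, b))


def truth_table(gate) -> list[int]:
    """Return Q for the input rows (A, B) = 00, 01, 10, 11, in that order."""
    return [gate(a, b) for a, b in product((0, 1), repeat=2)]


for gate in (and_gate, or_gate, nand_gate, nor_gate):
    print(gate.__name__, truth_table(gate))
# prints:
# and_gate [0, 0, 0, 1]
# or_gate [0, 1, 1, 1]
# nand_gate [1, 1, 1, 0]
# nor_gate [1, 0, 0, 0]
```

Writing NAND and NOR in terms of the other three functions mirrors the point that they are simply combinations of an AND or an OR gate with a NOT gate.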

Boolean logic affects how computers operate. Have you ever wondered how a computer can do something like balance a checkbook, play chess, or spell-check a document? These are things that, just a few decades ago, only humans could do. Now computers do them with apparent ease. How can a "chip" made up of silicon and wires do something that seems like it requires human thought?

If you want to understand the answer to this question down at the very core, the first thing you need to understand is something called Boolean logic. Boolean logic, originally developed by George Boole in the mid-1800s, allows quite a few unexpected things to be mapped into bits and bytes. The great thing about Boolean logic is that, once you get the hang of things, it (or at least the parts you need in order to understand the operations of computers) is outrageously simple. In this article, we will first discuss simple logic "gates," and then see how to combine them into something useful.
